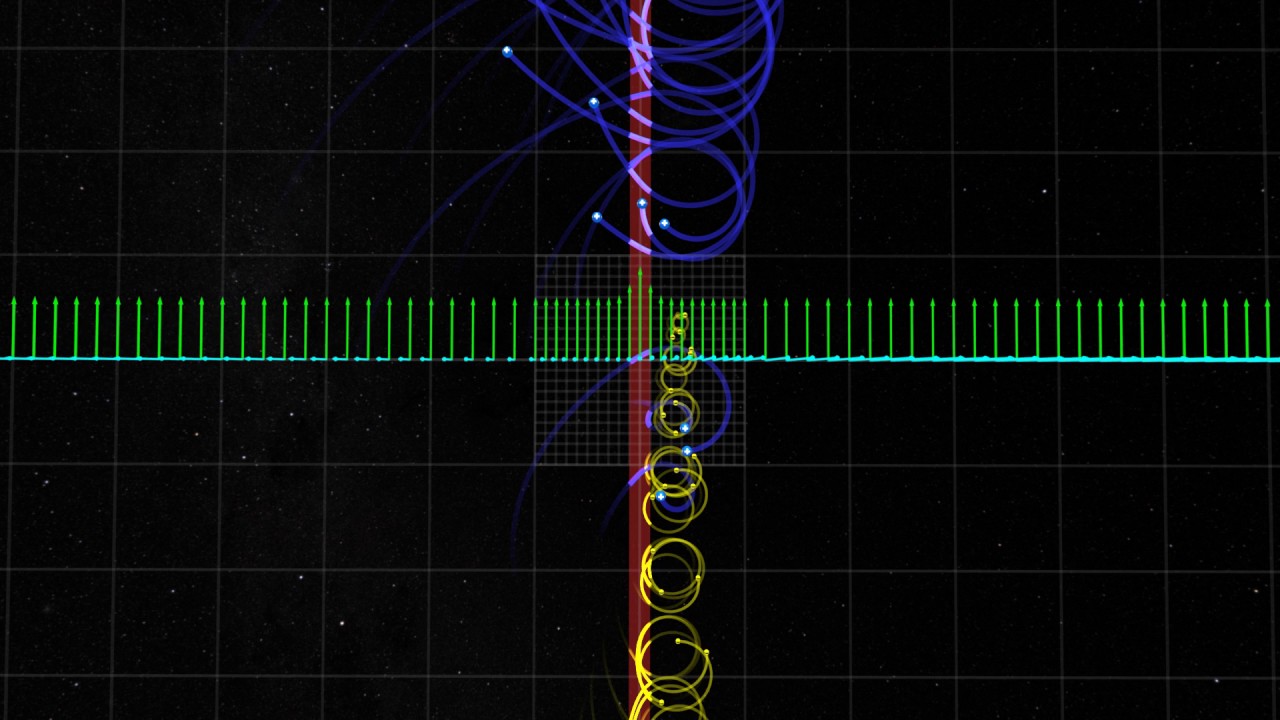

High above the surface, Earth’s magnetic field constantly deflects incoming supersonic particles from the sun. These particles are disturbed in regions just outside of Earth’s magnetic field, and some are reflected into a turbulent region called the foreshock. New observations from NASA’s THEMIS (Time History of Events and Macroscale Interactions during Substorms) mission show that this turbulent region can accelerate electrons to speeds approaching the speed of light. Such extremely fast particles have been observed in near-Earth space and many other places in the universe, but the mechanisms that accelerate them are not yet well understood.

The new results provide a first step toward an answer while opening up more questions. The research finds that electrons can be accelerated to extremely high speeds in a near-Earth region farther from Earth than previously thought possible, which raises new questions about what causes the acceleration. These findings may change the accepted theories on how electrons are accelerated not only in shocks near Earth, but also throughout the universe. A better understanding of how particles are energized will help scientists and engineers better equip spacecraft and astronauts to deal with these particles, which can cause equipment to malfunction and affect space travelers.

“This affects pretty much every field that deals with high-energy particles, from studies of cosmic rays to solar flares and coronal mass ejections, which have the potential to damage satellites and affect astronauts on expeditions to Mars,” said Lynn Wilson of NASA’s Goddard Space Flight Center in Greenbelt, Maryland, lead author of the paper on these results.

The results, published in Physical Review Letters on Nov. 14, 2016, describe how such particles may get accelerated in specific regions just beyond Earth’s magnetic field. Typically, a particle streaming toward Earth first encounters a boundary region known as the bow shock, which forms a protective barrier between Earth and the solar wind, the continuous and varying stream of charged particles flowing from the sun. The magnetic field in the bow shock slows the particles, causing most to be deflected away from Earth, though some are reflected back toward the sun. These reflected particles form a region of electrons and ions called the foreshock region.

Some of those particles in the foreshock region are highly energetic, fast moving electrons and ions. Historically, scientists have thought one way these particles get to such high energies is by bouncing back and forth across the bow shock, gaining a little extra energy from each collision. However, the new observations suggest the particles can also gain energy through electromagnetic activity in the foreshock region itself.

The observations that led to this discovery were taken by one of the THEMIS mission satellites. The five THEMIS satellites circled Earth to study how the planet’s magnetosphere captured and released solar wind energy, in order to understand what initiates the geomagnetic substorms that cause auroras. The THEMIS orbits took the spacecraft across the foreshock boundary regions. The primary THEMIS mission concluded successfully in 2010, and two of the satellites now collect data in orbit around the moon.

Operating between the sun and Earth, the spacecraft found electrons accelerated to extremely high energies. The bursts of energetic electrons lasted less than a minute, but their energies were much higher than the average energy of particles in the region, and much higher than can be explained by collisions alone. Simultaneous observations from other heliophysics spacecraft, Wind and STEREO, showed no solar radio bursts or interplanetary shocks, so the high-energy electrons did not originate from solar activity.

“This is a puzzling case because we’re seeing energetic electrons where we don’t think they should be, and no model fits them,” said David Sibeck, co-author and THEMIS project scientist at NASA Goddard. “There is a gap in our knowledge, something basic is missing.”

The electrons also could not have originated from the bow shock, as had previously been thought. If the electrons had been accelerated at the bow shock, they would have a preferred direction of motion and location: aligned with the magnetic field and moving away from the bow shock within a small, specific region. However, the observed electrons were moving in all directions, not just along magnetic field lines. Additionally, the bow shock can only produce energies roughly one tenth of those observed in these electrons. Instead, the researchers concluded that the electrons were accelerated within the foreshock region itself.

“It seems to suggest that incredibly small scale things are doing this because the large scale stuff can’t explain it,” Wilson said.

High-energy particles have been observed in the foreshock region for more than 50 years, but until now, no one had seen high-energy electrons originate from within the foreshock region. This is partly due to the short timescale on which the electrons are accelerated: previous observations were averaged over several minutes, which may have hidden such brief events. THEMIS takes measurements much more frequently, making it uniquely able to see the particles.

Next, the researchers intend to gather more observations from THEMIS to determine the specific mechanism behind the electrons’ acceleration.

Source: NASA.gov news release reused under public domain rights and in accordance with the NASA media guidelines.

Now, Check Out:

- Strange Depression on Mars Could Harbor Life

- Series of NASA CubeSat Missions Will Take a Fresh Look at Planet Earth [Video]

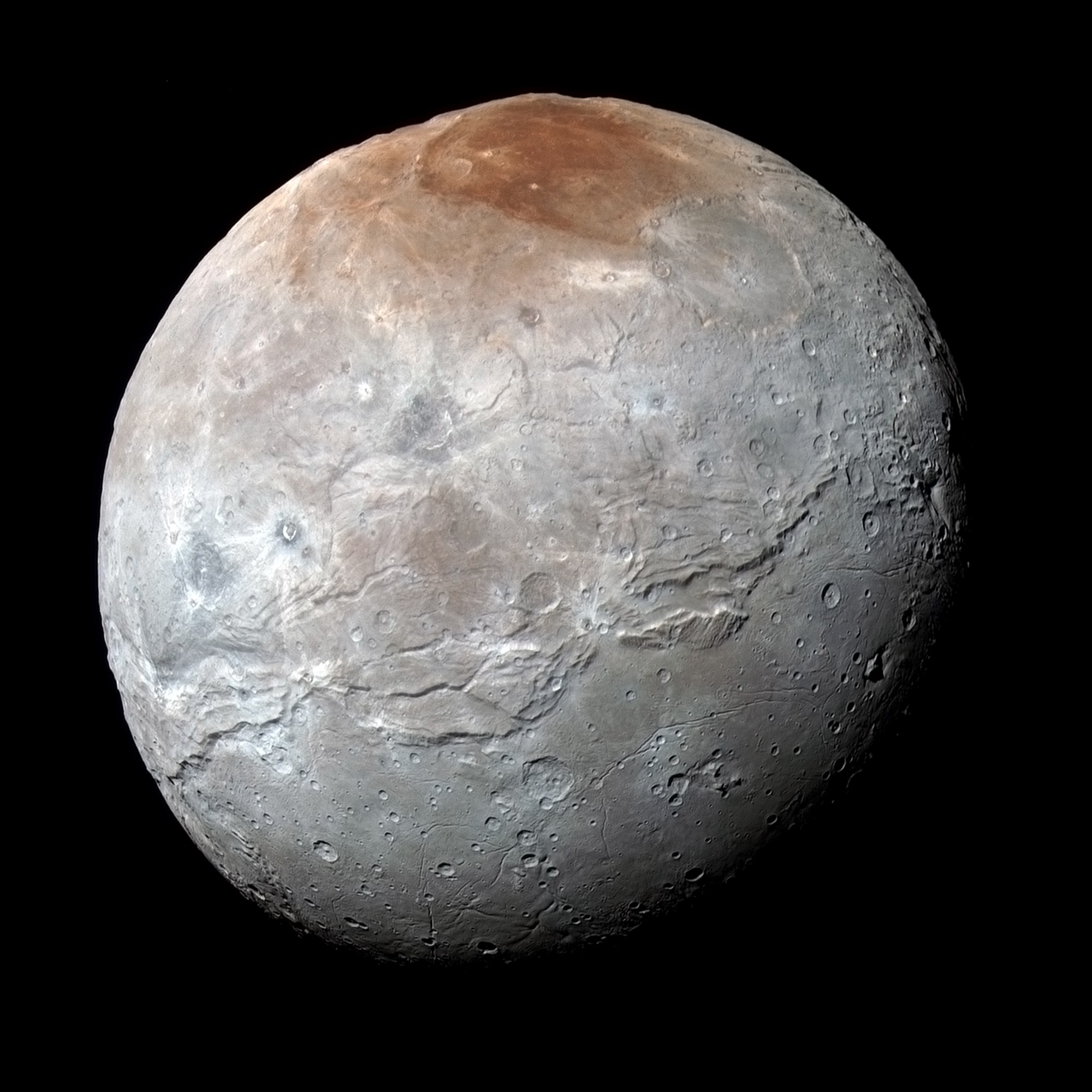

- How Pluto Spray-Paints Charon Red Like a Graffiti Artist

- Novel Perovskite Solar Cell Could Rival Silicon [Video]

- Turning diamonds’ defects into long-term 3-D data storage

![Series of NASA CubeSat Missions Will Take a Fresh Look at Planet Earth [Video]](https://sciencerocksmyworld.com/wp-content/uploads/2018/05/series-of-nasa-cubesat-missions.jpg)